
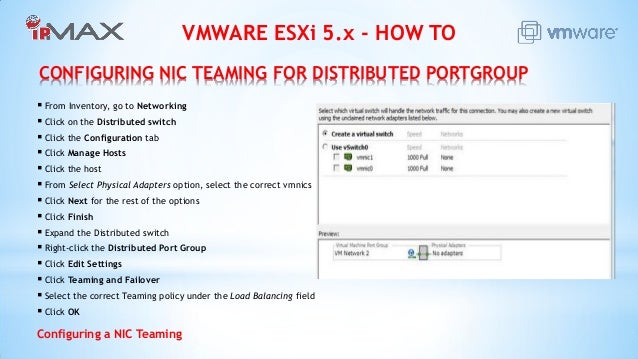

Take a server with 4 pNICs again, with the following pNIC utilisation levels:

This is the only method that is utilisation aware and actually balances load across pNICs. It also requires no special switch configuration (same as Virtual Port ID/MAC Hash). Initial placement of VMs uses the exact same calculation as Virtual Port ID. Once a pNIC has been 75% utilised for 30s, a calculation is run and, in a sort-of "network-DRS" kind of way, the connections are shuffled around to try to create a more balanced pNIC load. RX and TX are checked separately rather than averaged: if RX is at 99% and TX is at 1%, the average is 50%, but RX is above the 75% threshold, so the pNIC is marked as saturated.
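The saturation rule described above can be sketched as a small check. This is only an illustration of the 75%-for-30s rule as stated in the text, not VMware's implementation; the function name and its parameters are hypothetical.

```python
# Thresholds taken from the text above.
SATURATION_THRESHOLD = 0.75  # 75% utilisation
SUSTAINED_SECONDS = 30       # must be sustained for 30s


def is_saturated(rx_util: float, tx_util: float, seconds_sustained: int) -> bool:
    """Return True if a pNIC would count as saturated (hypothetical helper).

    RX and TX are judged separately: the busier direction decides,
    not the average of the two.
    """
    busiest = max(rx_util, tx_util)
    return busiest >= SATURATION_THRESHOLD and seconds_sustained >= SUSTAINED_SECONDS


# RX at 99%, TX at 1%: the average is only 50%, but RX alone trips the rule.
print(is_saturated(0.99, 0.01, 30))
# Both directions at 50%: same average, but neither direction is above 75%.
print(is_saturated(0.50, 0.50, 30))
```

Taking the maximum of RX and TX rather than the mean is what makes the 99%/1% case count as saturated.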

Cons: Again, you are at the mercy of a very busy client + server connection loading up one pNIC. It is also harder to configure, requires specific physical switch and vDS config, and doesn't "just work".

In theory, this gives more than single-pNIC throughput to one guest from multiple clients, provided the connections are distributed across pNICs. So, as you can see, even connections to the same VM, but from different clients, will get distributed across pNICs.


Let's look at a practical example (again, assuming a host with 4 pNICs):

- Client1 -> VM1 = ( 201 mod 4 ) = 1 = vmnic1
- Client1 -> VM2 = ( 200 mod 4 ) = 0 = vmnic0
- Client2 -> VM1 = ( 202 mod 4 ) = 2 = vmnic2
- Client3 -> VM1 = ( 203 mod 4 ) = 3 = vmnic3

Obviously, the guest and client IP remain the same throughout that particular communication, so that connection will stay pinned to its calculated pNIC. If a pNIC goes down, the re-calc is run across the remaining live pNICs. Pretty neat.
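The pinning and re-calc behaviour in the example above can be sketched as follows. The hash values (200 to 203) are the ones from the example. The `pick_uplink` helper and the assumption that the surviving pNICs keep their original order are illustrative only, so the post-failure mapping on a real host may differ.

```python
def pick_uplink(hash_value: int, live_uplinks: list[str]) -> str:
    """Map a connection's hash onto one of the currently live pNICs.

    Hypothetical helper: modulus by the number of live uplinks,
    as described in the text.
    """
    return live_uplinks[hash_value % len(live_uplinks)]


all_uplinks = ["vmnic0", "vmnic1", "vmnic2", "vmnic3"]
connections = {
    "Client1 -> VM1": 201,
    "Client1 -> VM2": 200,
    "Client2 -> VM1": 202,
    "Client3 -> VM1": 203,
}

# All four pNICs up: reproduces the mapping from the example.
for name, hash_value in connections.items():
    print(name, "=", pick_uplink(hash_value, all_uplinks))

# vmnic1 goes down: the same hashes are re-calculated mod 3
# (assumes the remaining uplinks keep their relative order).
live_uplinks = [u for u in all_uplinks if u != "vmnic1"]
for name, hash_value in connections.items():
    print(name, "=", pick_uplink(hash_value, live_uplinks))
```

Because the hash for a given client/guest pair never changes, the result only moves when the set of live pNICs changes.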

This method balances connections across pNICs based on source and destination IP addresses, so a single guest communicating with clients on different IP addresses will be distributed across pNICs. It makes a hash of the source and destination IP addresses, then runs a bitwise XOR and a modulus on those based on the number of pNICs in the server. With 4 pNICs, as in the example above, it runs (hex1 xor hex2) mod 4. This ensures (roughly) that connections between a single source and different destinations are distributed across the pNICs.
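As a rough illustration of the XOR-and-modulus step, here is a minimal sketch. It assumes the full 32-bit IPv4 addresses are XOR-ed directly, and the exact bits ESXi feeds into the hash may differ. The function name and the IP addresses are made up for the example.

```python
import ipaddress


def ip_hash_uplink(src_ip: str, dst_ip: str, uplink_count: int) -> int:
    """Return the index of the pNIC a src/dst pair would land on.

    Simplified sketch: (src xor dst) mod number_of_pNICs.
    """
    src = int(ipaddress.IPv4Address(src_ip))
    dst = int(ipaddress.IPv4Address(dst_ip))
    return (src ^ dst) % uplink_count


# One guest (10.0.0.10) talking to two different clients on a 4-pNIC host.
print(ip_hash_uplink("10.0.0.10", "10.0.0.20", 4))
print(ip_hash_uplink("10.0.0.10", "10.0.0.21", 4))
```

XOR is symmetric, so swapping source and destination gives the same index, and the same pair of addresses always lands on the same pNIC.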

For this to work, the physical switches that the hosts uplink to must be stacked, or use a stacking-like technology (like Cisco vPC). A single switch will also work. For example, if you have a pair of stacked Dell 8024F/Cisco 3750-X switches, create a LAG/Port Channel on them and replicate the config on your vDS.

So the workloads are pretty evenly balanced, but again we are at the mercy of vNIC placement, not actual load on the pNIC.